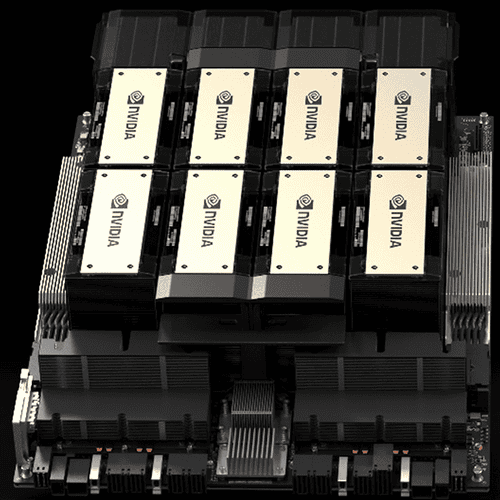

NVIDIA HGX H200

- 141GB of HBM3e GPU memory

- 4.8TB/s of memory bandwidth

- 4 petaFLOPS of FP8 performance

- Up to 2X LLM inference performance vs. NVIDIA H100

- Up to 110X HPC performance vs. CPUs

About

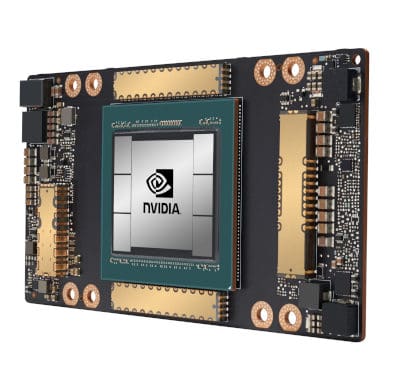

The GPU for Generative AI and HPC

The NVIDIA H200 Tensor Core GPU supercharges generative AI and high-performance computing (HPC) workloads with game-changing performance and memory capabilities. As the first GPU with HBM3e, the H200’s larger and faster memory fuels the acceleration of generative AI and large language models (LLMs) while advancing scientific computing for HPC workloads.
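To make the memory argument concrete, here is a back-of-envelope Python sketch of what 141 GB and 4.8 TB/s mean for LLM serving on a single H200. It is an illustration under stated assumptions, not NVIDIA's methodology: decimal gigabytes, weights only (no KV cache, activations, or runtime overhead), batch size 1, and every weight read once from HBM per generated token, so bandwidth sets a latency floor. The 70B-parameter model is hypothetical, and the results are bounds, not benchmarks.

```python
# Back-of-envelope LLM sizing for one H200 (141 GB HBM3e, 4.8 TB/s).
# Assumptions (illustrative, not NVIDIA methodology): decimal units
# (1 GB = 1e9 bytes), weights only (no KV cache, activations, or runtime
# overhead), batch size 1, and every weight read once from HBM per token.

GPU_MEMORY_GB = 141.0
GPU_BANDWIDTH_TB_S = 4.8

# Storage cost per parameter for two common inference precisions.
BYTES_PER_PARAM = {"FP16": 2, "FP8": 1}


def max_params_billions(dtype: str) -> float:
    """Largest parameter count (billions) whose weights alone fit in GPU memory."""
    return GPU_MEMORY_GB / BYTES_PER_PARAM[dtype]


def min_ms_per_token(params_billions: float, dtype: str) -> float:
    """Latency floor per generated token if streaming the weights is the bottleneck."""
    weight_bytes = params_billions * 1e9 * BYTES_PER_PARAM[dtype]
    seconds = weight_bytes / (GPU_BANDWIDTH_TB_S * 1e12)
    return seconds * 1e3


if __name__ == "__main__":
    for dtype in BYTES_PER_PARAM:
        print(f"{dtype}: weights for up to ~{max_params_billions(dtype):.1f}B parameters")
    # Hypothetical 70B-parameter model stored in FP8 (about 70 GB of weights).
    ms = min_ms_per_token(70, "FP8")
    print(f"70B @ FP8: >= {ms:.1f} ms/token, <= {1000 / ms:.0f} tokens/s per stream")
```

Under these assumptions FP16 weights top out around 70B parameters and FP8 around 141B, and a 70B FP8 model cannot decode faster than roughly 14.6 ms per token per stream. Batching, KV-cache traffic, and kernel efficiency all move real numbers away from this bound.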

Specification

| Specification | HGX H200 (per GPU) |
|---|---|
| FP64 | 34 TFLOPS |
| FP64 Tensor Core | 67 TFLOPS |
| FP32 | 67 TFLOPS |
| TF32 Tensor Core | 989 TFLOPS² |
| BFLOAT16 Tensor Core | 1,979 TFLOPS² |
| FP16 Tensor Core | 1,979 TFLOPS² |
| FP8 Tensor Core | 3,958 TFLOPS² |
| INT8 Tensor Core | 3,958 TOPS² |
| GPU memory | 141GB |
| GPU memory bandwidth | 4.8TB/s |
| Decoders | 7 NVDEC, 7 JPEG |
| Confidential Computing | Supported |
| Max thermal design power (TDP) | Up to 700W (configurable) |
| Multi-Instance GPUs | Up to 7 MIGs @ 16.5GB each |
| Form factor | SXM |
| Interconnect | NVIDIA NVLink®: 900GB/s; PCIe Gen5: 128GB/s |
| Server options | NVIDIA HGX™ H200 partner and NVIDIA-Certified Systems™ with 4 or 8 GPUs |
| NVIDIA AI Enterprise | Add-on |

² With sparsity.
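The server options listed above come with 4 or 8 GPUs, so one quick way to read the per-GPU specifications is to scale them up. The following is a minimal Python sketch, assuming simple linear scaling of theoretical peaks; `PER_GPU`, `hgx_totals`, and `dense_tflops` are hypothetical helpers, not an NVIDIA tool. It also assumes NVIDIA's usual convention that Tensor Core figures quoted with sparsity are twice the dense peak.

```python
# Naive scaling of per-GPU H200 peaks to the 4- and 8-GPU HGX H200 server options.
# Assumption: totals are per-GPU peak x GPU count; real throughput also depends on
# NVLink communication, software, and workload. Names here are illustrative only.

PER_GPU = {
    "GPU memory (GB)": 141,
    "GPU memory bandwidth (TB/s)": 4.8,
    "FP8 Tensor Core, with sparsity (TFLOPS)": 3958,
    "BF16 Tensor Core, with sparsity (TFLOPS)": 1979,
}


def dense_tflops(sparse_tflops: float) -> float:
    """Sparsity figures are quoted at twice the dense peak, so halve them."""
    return sparse_tflops / 2


def hgx_totals(num_gpus: int) -> dict:
    """Aggregate each per-GPU figure over the GPUs in one HGX H200 board."""
    if num_gpus not in (4, 8):
        raise ValueError("HGX H200 server options list 4 or 8 GPUs")
    return {name: value * num_gpus for name, value in PER_GPU.items()}


if __name__ == "__main__":
    for n in (4, 8):
        for name, total in hgx_totals(n).items():
            print(f"{n} GPUs | {name}: {total:,.1f}")
    fp8_sparse = PER_GPU["FP8 Tensor Core, with sparsity (TFLOPS)"]
    print(f"FP8 dense peak per GPU: {dense_tflops(fp8_sparse):,.0f} TFLOPS")
```

At 8 GPUs this gives 1,128 GB of HBM3e and about 31.7 PFLOPS of sparse FP8 peak. Per GPU, 3,958 TFLOPS is the roughly 4 petaFLOPS FP8 figure quoted in the highlights, and its dense equivalent is 1,979 TFLOPS.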


Related products

NVIDIA L40

SKU: 900-2G133-0010-000

The NVIDIA L40 brings the highest level of power and performance for visual computing workloads in the data center. Third-generation RT Cores and industry-leading 48 GB of GDDR6 memory deliver up to twice the real-time ray-tracing performance of the previous generation to accelerate high-fidelity creative workflows, including real-time, full-fidelity, interactive rendering, 3D design, video streaming, and virtual production.

NVIDIA A100 Tensor Core GPU

SKU: N/A

- GPU Memory: 40 GB

- Peak FP16 Tensor Core: 312 TFLOPS

- System Interface: 4/8 SXM on NVIDIA HGX A100

NVIDIA T4

SKU: N/A

- GPU Memory: 16 GB GDDR6

- CUDA Cores: 2560

- NVIDIA Tensor Cores: 320

- System Interface: x16 PCIe Gen3
